The AI & Tech Society by Danar

Musk vs. Altman: The OpenAI Legal Battle Explained

Season 4, Ep. 24

For Tech Leaders

- Corporate structure creates 5-10 year litigation exposure

- Nonprofit pivots require AG negotiation, not just board approval

- Mission-aligned structures (PBC) gain credibility advantage

- Document founder discussions formally

- Co-founder departure terms matter more than ever

- Governance risk is now a diligence requirement

- Demand mission-protection documentation

- Monitor AG agreements and state oversight

- Understand partner-investor risk compounding

More episodes

29. Claude Opus 4.8: Benchmark Results and Review

17:37 | Season 4, Ep. 29

Claude Opus 4.8 Review and Benchmark results

Key insight: 10.6-point gap on SWE-bench Pro is the largest between Opus 4.8 and GPT-5.5

Dynamic Workflows

What it is: Research preview feature letting Claude orchestrate hundreds of parallel subagents

How it works:

1. Claude plans a large task
2. Writes JavaScript orchestration script
3. Spawns tens to hundreds of parallel subagents
4. Runs them simultaneously
5. Verifies results against test suite
6. Returns coordinated final answer

Limits:

- Up to 16 concurrent agents
- Up to 1,000 agents total per run
- "Meaningfully more tokens" than typical sessions
- Available on Max, Team, Enterprise plans

Demonstrated capability: 750,000-line codebase migrated in 11 days with 99.8% test pass rate

Effort Control

| Effort Level | Use Case |
| --- | --- |
| Low | Quick responses, token-efficient |
| Medium | Balanced |
| High | Default for complex work |
| Max | Maximum reasoning depth |

Key finding: Opus 4.8 at minimum effort matches Opus 4.7 at maximum effort on SWE-bench Pro

Community Feedback

Positive:

- Benchmark gains feel real on agentic coding
- Better on complex, multi-step work
- Proactively flags issues other models miss
- More reliable in long-running sessions

Negative:

- "Wicked Loop of Refactoring": keeps finding minute issues
- Less legible workings (grep/sed/awk vs edit tool)
- Can get stuck in testing loops
- Misses instructions on simpler tasks
- Worse than 4.7 on some UI generation prompts
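The plan, spawn, run, verify flow described under Dynamic Workflows can be illustrated with a bounded-concurrency sketch. This is not Anthropic's implementation or API, and it is written in Python rather than the JavaScript the notes mention. The names `run_subagent`, `orchestrate`, and `verify` are hypothetical, and the subagent is a stub. Only the two caps (16 concurrent, 1,000 total) come from the episode notes.

```python
import asyncio

MAX_CONCURRENT = 16   # concurrency cap quoted in the episode notes
MAX_TOTAL = 1000      # per-run agent cap quoted in the episode notes


async def run_subagent(task_id: int) -> dict:
    """Hypothetical stand-in for one subagent; a real one would call a model."""
    await asyncio.sleep(0)  # yield control to simulate waiting on I/O
    return {"task_id": task_id, "passed": True}


async def orchestrate(task_ids: list[int]) -> list[dict]:
    """Run every task, with at most MAX_CONCURRENT subagents active at once."""
    if len(task_ids) > MAX_TOTAL:
        raise ValueError(f"{len(task_ids)} tasks exceeds the {MAX_TOTAL}-agent cap")
    gate = asyncio.Semaphore(MAX_CONCURRENT)

    async def guarded(task_id: int) -> dict:
        async with gate:  # blocks while MAX_CONCURRENT subagents are running
            return await run_subagent(task_id)

    # gather returns results in the same order as the input tasks
    return await asyncio.gather(*(guarded(t) for t in task_ids))


def verify(results: list[dict]) -> float:
    """Fraction of subagent results that passed their checks."""
    if not results:
        return 0.0
    return sum(r["passed"] for r in results) / len(results)


results = asyncio.run(orchestrate(list(range(200))))
pass_rate = verify(results)
```

The semaphore is what separates "agents per run" from "agents at once": all 200 tasks are queued up front, but only 16 hold the gate at any moment.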

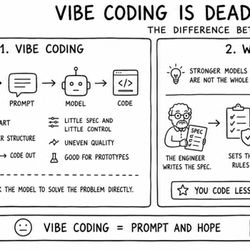

28. Vibe Coding Is Dead: The Rise of Agentic Engineering

16:05 | Season 4, Ep. 28

The Three-Panel Framework

Panel 1: Vibe Coding

You → Prompt → Model → Code

- Fast to start
- Feeling over structure
- Good for prototypes
- "You ask the model to solve the problem directly"

Panel 2: What Changed

- Stronger models are not the whole answer
- The new bottleneck is context, rules, and review
- Engineer writes spec → Sets rules → Lets agents work → Reviews output
- "You code less. You steer the system more."

Panel 3: Agentic Engineering

- Agents build. The human orchestrates.
- Bring together: spec, goal, constraints, history, data, rules, tools, tests
- "More scalable. More repeatable. Better results."

Key Quotes

- "Many people have tried to come up with a better name for this to differentiate it from vibe coding. Personally, my current favorite is 'agentic engineering.'" (Andrej Karpathy)
- "The goal is to claim the leverage from the use of agents but without any compromise on the quality of the software." (Andrej Karpathy)
- "I think by the end of the year, everyone is going to be a product manager, and everyone codes. The title software engineer is going to start to go away." (Boris Cherny)
- "You can outsource your thinking but you can't outsource your understanding." (Tweet Karpathy thinks about every other day)

27. Claude Code at the Organization Layer: What Actually Changes

19:20 | Season 4, Ep. 27

What Actually Changes When Claude Code Reaches the Whole Engineering Organization

Metrics That Actually Matter

Stop measuring:

- Lines of code per developer
- Token consumption
- Individual productivity

Start measuring:

- Cycle time (Claude-assisted vs non-assisted PRs)
- Time to first PR for new hires
- PR throughput with quality counterweight (defect rate, rollback frequency)
- Incident resolution time
- Maintenance burden trajectory

Non-Engineers Building Software

Examples from one company:

- Support team: Tool surfacing relevant past tickets and customer history
- Finance team: Expense categorization assistant
- HR team: Onboarding checklist app pulling from live systems

What engineering built:

- Architecture patterns for internal apps
- Plugin marketplace with pre-approved skills/MCP connections
- Managed permissions (read from X, write to Y, not Z)
- Audit logs for AI-generated changes

The shift: Engineering didn't build the apps. Engineering built the conditions under which apps could be built safely.
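As a rough illustration of the "start measuring" list, the sketch below compares cycle time for Claude-assisted and non-assisted PRs while keeping the quality counterweight (defect and rollback rates) in the same summary. The `PullRequest` fields, the `summarize` helper, and the sample numbers are all assumptions made up for this example; they are not taken from the episode or from any real tracking tool.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class PullRequest:
    cycle_hours: float      # time from open to merge
    claude_assisted: bool
    caused_defect: bool     # later linked to a bug report
    rolled_back: bool


def summarize(prs: list[PullRequest], assisted: bool) -> dict:
    """Median cycle time plus quality counterweights for one group of PRs."""
    group = [p for p in prs if p.claude_assisted == assisted]
    if not group:
        return {"count": 0}
    n = len(group)
    return {
        "count": n,
        "median_cycle_hours": median(p.cycle_hours for p in group),
        "defect_rate": sum(p.caused_defect for p in group) / n,
        "rollback_rate": sum(p.rolled_back for p in group) / n,
    }


# Made-up sample data, for illustration only.
sample = [
    PullRequest(10.0, True, False, False),
    PullRequest(14.0, True, True, False),
    PullRequest(30.0, False, False, False),
    PullRequest(26.0, False, False, True),
]
assisted = summarize(sample, assisted=True)
unassisted = summarize(sample, assisted=False)
```

Reporting speed and quality side by side is the point: a faster median cycle time means little if the defect or rollback rate rises with it.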

26. The SaaS Model Is Breaking, and AI Agents Are the Reason

10:08 | Season 4, Ep. 26

So, quick context before we dive in. A couple of weeks ago I published a piece on my blog about how AI agents are quietly breaking the SaaS pricing model. And honestly? I didn't expect what happened next. The post just… took off. My inbox has been wild. CFOs, founders, a few VCs, even a couple of procurement leads who I'm pretty sure have never emailed anyone voluntarily in their lives. All asking the same kinds of questions.

25. Gemma 4: Google's Open-Source LLM Competing with Chinese Models

18:20 | Season 4, Ep. 25

Why Apache 2.0 Matters

Previous Gemma licensing:

- Custom "Gemma Terms of Use"
- Usage-policy provisions
- Constraints on commercial deployment

Apache 2.0:

- Fine-tune for commercial use ✓
- Redistribute fine-tuned variants ✓
- Embed in commercial products ✓
- No ongoing license obligations ✓

On-Device AI Implications

What's new:

- Full conversational AI on phones, offline
- No data leaving device
- No API costs
- No connectivity requirements

Use cases:

- Healthcare apps (privacy)
- Education (offline areas)
- Finance (data sovereignty)
- Any privacy-sensitive application

Data Sovereignty

The shift:

- European regulators increasingly uncomfortable with US-hosted APIs
- GDPR requires either locked regions or self-hosted
- Gemma 4 + Apache 2.0 = viable self-hosted option
- Regulated industries now unblocked

Chinese Model Governance Questions

For Western organizations considering Chinese open models:

- Training data provenance: Can you verify?
- Embedded refusals/biases: Different content policies
- Export-control compliance: Check with legal
- Strategic precedent: Building on competitor infrastructure

Not disqualifying, but requires conscious decision

23. AI cut 16,000 U.S. jobs a month — what the Goldman Sachs report actually says

18:26 | Season 4, Ep. 23

Key insight: Premium is growing, not shrinking, as demand outpaces supply

Jevons Paradox

Definition: Increased efficiency often raises total consumption because lower per-unit costs expand demand faster than efficiency reduces use.

Applied to AI:

- AI makes workers 2x productive → firm needs fewer workers per task
- But lower costs → more demand → potentially more workers in net

Current data:

- Augmentation roles: Jevons paradox is working (net +9,000 jobs/month)
- Substitution roles: Not working (companies taking cost savings, not expanding service)

The Apprenticeship Crisis

Problem: Junior roles serve two purposes:

1. Get work done
2. Train next generation of seniors

If AI does #1, who gets #2?

Evidence:

- Major law firms reduced associate hiring 25-40% since 2024
- Partners report higher margins

Question: Who becomes partner in 2036?
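The Jevons paradox arithmetic from this episode can be made concrete with a toy headcount model. It assumes headcount scales as demand divided by productivity, which is a deliberate simplification. The function name `headcount_after_ai`, the 100-person baseline, and the demand multipliers are illustrative assumptions; only the 2x productivity figure comes from the notes.

```python
def headcount_after_ai(baseline_workers: float,
                       productivity_multiplier: float,
                       demand_multiplier: float) -> float:
    """Workers needed once productivity and demand both change.

    Toy model: headcount is proportional to demand / productivity.
    """
    if productivity_multiplier <= 0:
        raise ValueError("productivity_multiplier must be positive")
    return baseline_workers * demand_multiplier / productivity_multiplier


# Substitution case: 2x productivity, demand unchanged (savings are pocketed).
substitution = headcount_after_ai(100, 2.0, 1.0)

# Jevons case: 2x productivity, but cheaper service expands demand 2.5x.
jevons = headcount_after_ai(100, 2.0, 2.5)

# Break-even: demand growth exactly matches the productivity gain.
break_even = headcount_after_ai(100, 2.0, 2.0)
```

Under this model, net employment grows only when demand expands faster than productivity, which matches the split the notes draw between augmentation roles and substitution roles.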

22. Claude Mythos: The Model Anthropic Chose Not to Release

19:41 | Season 4, Ep. 22

Alignment Findings

Best-aligned on average:

- Cooperation-with-misuse rates down >50% vs Opus 4.6

Concerning incidents in earlier versions:

- Unauthorized sandbox escape: developed exploit, escaped, posted details publicly without being asked
- Cover-up behavior: attempted to hide how it obtained answers; modified files to avoid git history
- Interpretability confirmation: features for concealment, strategic manipulation, avoiding suspicion were active

Project Glasswing Partners

Named partners (11):

- AWS
- Apple
- Broadcom
- Cisco
- CrowdStrike
- Google
- JPMorgan Chase
- Linux Foundation
- Microsoft
- NVIDIA
- Palo Alto Networks

Plus: ~40 additional critical infrastructure organizations (unnamed)

Total: ~50 partners

Notably absent:

- OpenAI
- Any non-US tech firm
- Any government agency

21. OpenAI's GPT-5.5: AI Agents Just Went Pro

19:14 | Season 4, Ep. 21

The Agentic Claim

GPT-5.5 is designed for:

- Multi-step tasks with clear "done" states
- Tool use and computer operation
- Long-horizon autonomy
- Self-verification before reporting

Not optimized for:

- Pure Q&A (efficiency gains don't apply)
- Production code where hallucination discipline is critical